In February 2026, India wrapped up the four-day AI Impact Summit 2026, the first global AI summit held in the Global South, which aimed to mark the country’s position in the global AI economy. India’s push towards AI, however, carries perils for women, with non-consensual sexual AI deepfakes a major concern.

With the influx of AI-generated content, concerns have grown over the creation, replication, and dissemination of deepfakes. Non-consensual sexual deepfakes have been widely generated using various AI chatbots. According to data from The New York Times and the Center for Countering Digital Hate, Grok, the AI chatbot available on ‘X’ (formerly Twitter), was used to generate and publicly share around 1.8 million sexualised images of women by January 2026.

AI deepfakes are synthetic videos and images created using artificial intelligence. This highly realistic fabricated content is produced by deep learning models that replicate individuals’ characteristics, imitating their facial features and adapting them to any given prompt.

To tackle non-consensual sexual AI deepfakes, experts have made the case for multi-level accountability that spans the companies providing AI services, individual users and the legal framework.

“In the world of AI-powered deepfakes, concepts such as consent and individual identity are being highly compromised,” said Sruthi Muraleedharan, a faculty member at Shiv Nadar University who specialises in Gender Studies, the politics of identity, and symbolism.

According to her, AI deepfakes contribute to two forms of violation: first, the dilution of the individual’s consent over both the content and its circulation; and second, correspondingly, the violation of the individual’s right to privacy, dignity and reputation.

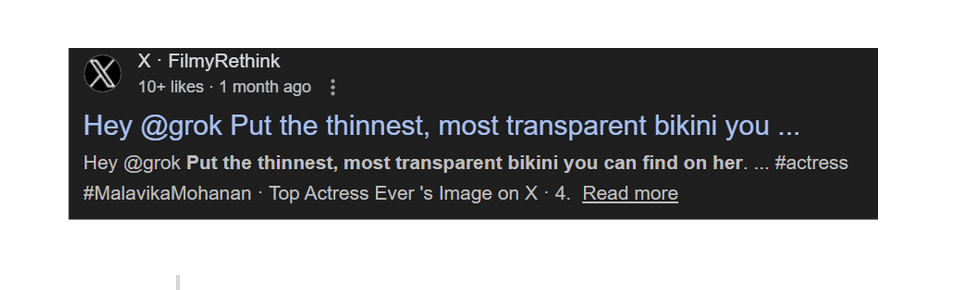

Since late December 2025, X’s chatbot Grok has been inundated with user requests to alter real photographs of women and children by removing their clothing, dressing them in bikinis, or positioning them in sexualised poses, triggering global outrage.

In India, actress Malavika Mohanan was one of the many celebrities targeted with lewd comments asking Grok to “put her in a bikini.”

Multiple women have raised concerns over the misuse of AI. In January 2026, Seema Anand, a London-based author and storyteller, filed a First Information Report (FIR) over the generation and circulation of non-consensual AI-generated sexual content depicting her. The 63-year-old posted a video on January 16 saying the incident made her feel “physically sick”.

Legal framework and its drawbacks

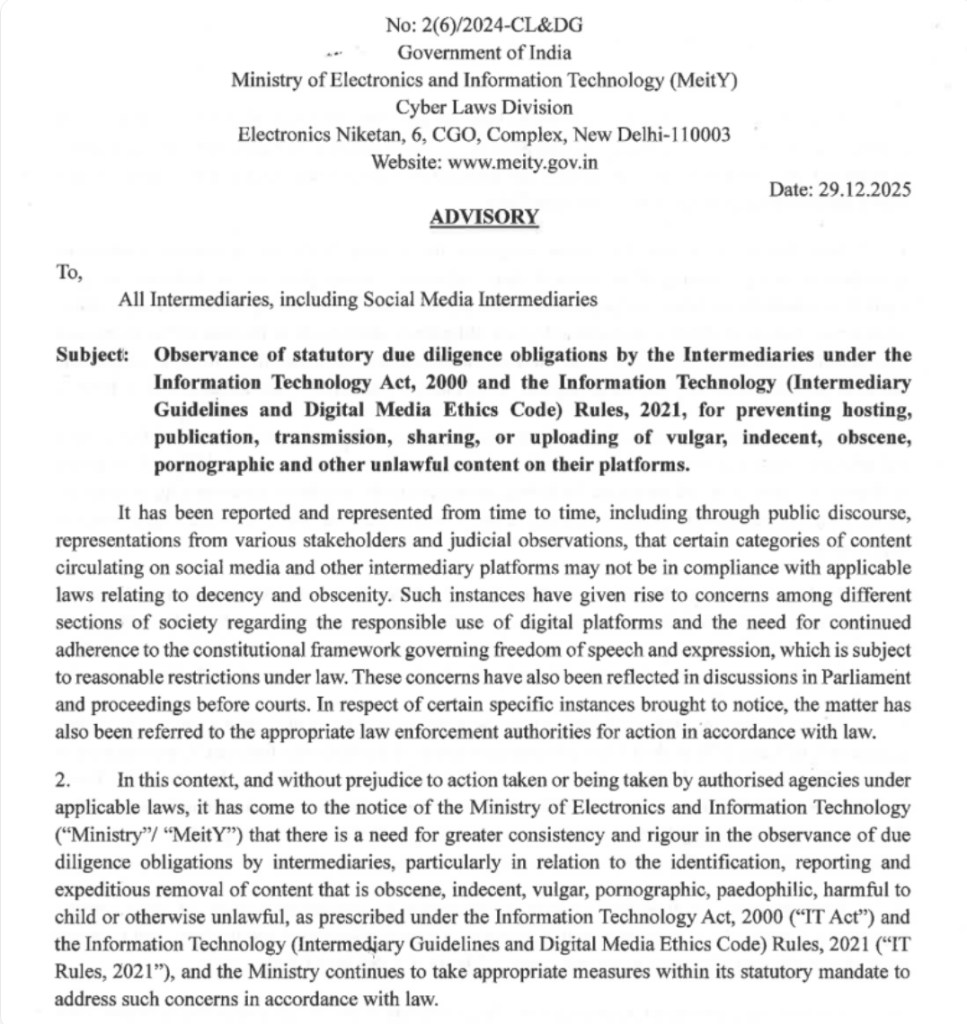

The prevalence of this issue prompted the Indian government to request action from Elon Musk in January 2026.

In the Indian legal framework, AI-related issues are addressed under the Information Technology (IT) Act, 2000, the Digital Personal Data Protection Act, 2023, and several other laws, including the Indecent Representation of Women (Prohibition) Act, 1986, the Protection of Children from Sexual Offences Act, 2012 (in cases involving minors), and the Young Persons (Harmful Publications) Act, 1956.

At the time of writing, image generation and editing in Grok has been restricted to premium subscribers of ‘X’. Apar Gupta, advocate and founding director of the Internet Freedom Foundation, said, “The reason chatbots continue to produce non-consensual content despite bans is a structural failure in how these models are built. We cannot solve a systemic problem with reactive bans.” He added that Elon Musk’s statements follow a tired pattern of “whack-a-mole” moderation.

During the Summit, Union Minister Ashwini Vaishnaw discussed the amendment to the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, which sets a three-hour deadline for social media platforms to take down AI-generated or deepfake content once it is flagged by the government or ordered by a court. Vaishnaw also spoke about mandating technical traceability and redefining intermediary obligations; however, the question of how to address the generation of such content remains unanswered.

Under the Digital Personal Data Protection Act, 2023, the circulation of non-consensual sexual content is prohibited, but its generation is not. Gupta added, “The legal obsession with ‘circulation’ lets the architects of these tools off the hook. Accountability is a shared ladder.” He said, “We need to evolve our laws, specifically the IT Rules, to hold platforms liable if they fail to proactively prevent the generation of such content, rather than waiting for a takedown notice.”

Muraleedharan said, “The nature of legal intervention and critical feminist legal analysis has not been to consider it [non-consensual sexual AI deepfakes] just as content which is ‘offensive’ but to bring it in the ambit of sexual abuse by employing digital forums.”

Digital footprint and gendered public access

Premium membership on X operates on a subscription model, with a basic subscription available for INR 170 per month. In January 2025, X had around 2 million subscribers, and Elon Musk said he hoped to increase that number by giving subscribers access to Grok’s full feature set.

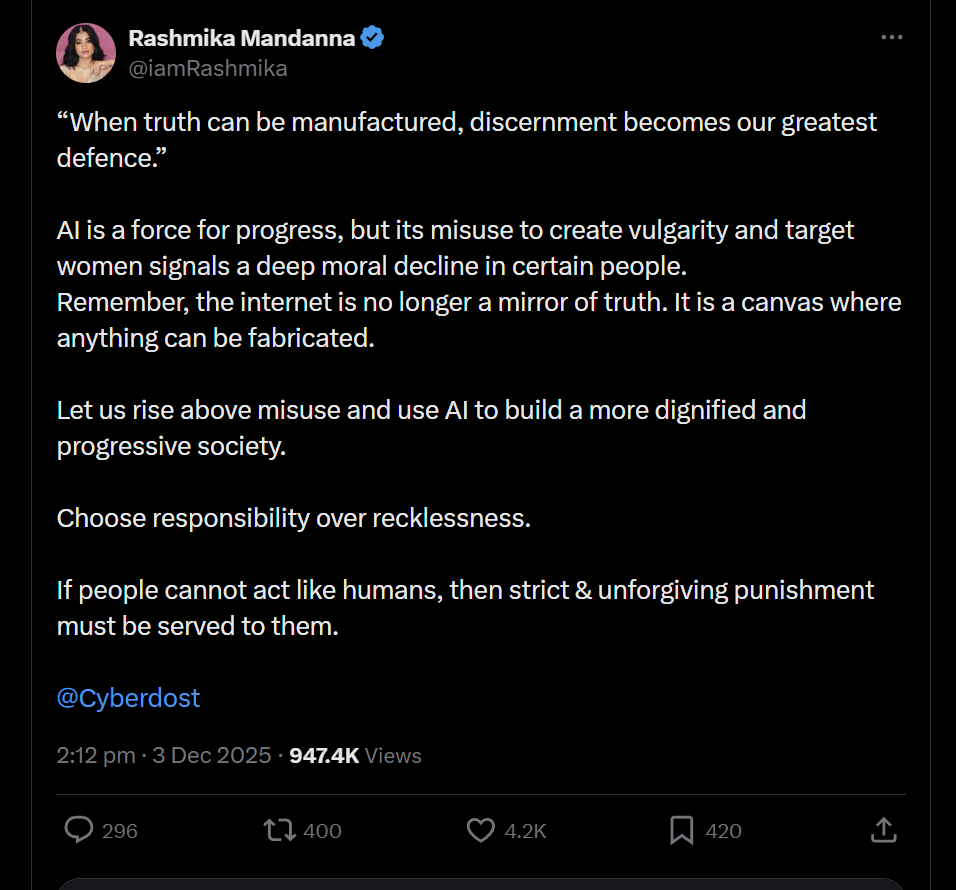

The problem of non-consensual sexual AI deepfakes, however, is not new. Similar outrage erupted in October 2023, when a deepfake video of actress Rashmika Mandanna went viral. She took to ‘X’ and said, “AI is a force for progress, but its misuse to create vulgarity and target women signals a deep moral decline in certain people. Remember, the internet is no longer a mirror of truth. It is a canvas where anything can be fabricated.”

Many public figures, such as Keerthy Suresh and Girija Oak Godbole, have released similar statements raising concerns about the misuse of AI, prompting the government to take action.

Responding to this reporter’s queries about the challenges in investigating deepfakes, the office of the Superintendent of Police (SP), Cybercrime, Lucknow, said in a written statement that identifying and tracing the original source of deepfake generation is difficult. “Burner emails, fake accounts and VPNs make identifying the real person behind the content extremely difficult,” the statement read.

Rati Foundation, an NGO offering socio-legal support to women and children, and Tattle, a community of technologists, researchers and artists working on responsible AI, media literacy and web scraping, released a report on AI’s role in online harassment on November 3, 2025. The report points to a shift in the nature of agency when AI-generated content is used to harass people online. It states, “In nearly all cases, the AI-generated content relies on photographs the victim has posted on their public profiles—the only ‘agency’ the victims had in causing the attack. This is to say that the victims had little to no agency in the content that contributed to their harassment.”

Women’s digital footprints and their gendered access to public spaces are being used as tools to harass them online. Muraleedharan says there is a realisation that even a basic sense of control over one’s digital presence can be compromised.

The question of problem-solving

Debate continues over the best way to tackle non-consensual sexual AI deepfakes. Regardless, countries worldwide have taken measures to curb the deepfake crisis. South Korea has criminalised the consumption of deepfake pornography as a step against digital sex crimes, imposing stricter penalties for its production, distribution and viewing. Denmark has prepared amendments to its national copyright rules to give people more control over their voices and images in AI-generated deepfakes.

The gendered impact of deepfakes deepens the digital divide, as gender-based violence, including the misuse of AI to create deepfakes, threatens women’s presence in public spaces.

Gender Studies expert Sruthi Muraleedharan says, “Technology is not neutral of social hierarchies that exist. Therefore, patriarchal and heteronormative assumptions along with intersectional dimensions of majoritarian politics can definitely inform the manner in which marginal groups are targeted resulting in a more expanded understanding of gender-based violence.” She cites the example of Sulli Deals, a website hosted on GitHub that took publicly available pictures of Indian Muslim women and created profiles describing the women as “deals of the day.”

Gupta says, “If a society is deeply patriarchal, its users will naturally use new tools like AI to reinforce that hierarchy through ‘image-based sexual abuse.’ If we don’t bake human rights and gender-sensitivity into the code from day one, the technology will inherently favour the powerful and target the marginalised.”

About the author(s)

Ishita Yadav is a freelance journalist and photographer based in Delhi. She is currently pursuing her Master’s degree in Convergent Journalism at AJK MCRC, Jamia Millia Islamia. Her work focuses on human rights and gender.